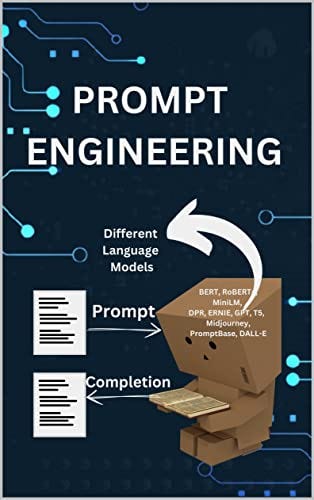

What developers need to know about Prompt Engineering

August 20, 2023

So, what is prompt engineering?

Prompt engineering is the practice of deliberately crafting the input instructions (prompts) given to an AI language model in order to steer its responses, making the output more precise and easier to control.

Here are some basic and advanced prompt engineering techniques:

- Zero-Shot Prompting

- Few-Shot Prompting

- Chain-of-Thought Prompting (CoT)

- Tree-of-Thought Prompting (ToT)

Let’s discuss each technique with code examples. For this we will use the OpenAI API and LangChain (an open-source framework for developing LLM-based applications).

Initial setup code:

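import openai
import os
# LangChain imports from the original setup; the examples below only use get_completion
from langchain.llms import OpenAI
from langchain.agents import load_tools
from langchain.agents import initialize_agent
from dotenv import load_dotenv, find_dotenv

_ = load_dotenv(find_dotenv())  # load variables from a .env file, if one exists

openai.api_key = os.getenv('OPENAI_API_KEY')


# Note: openai.ChatCompletion is the pre-1.0 interface of the openai package
# (it was removed in openai 1.0), so this code needs openai<1.0
def get_completion(prompt, model="gpt-3.5-turbo"):
    messages = [{"role": "user", "content": prompt}]
    response = openai.ChatCompletion.create(
        model=model,
        messages=messages,
        temperature=0,  # this is the degree of randomness of the model's output
    )
    return response.choices[0].message["content"]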

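We need to install openai, langchain and python-dotenv (the pip package that provides the dotenv module). Because get_completion uses the pre-1.0 openai interface, pin openai below 1.0:

pip install "openai<1.0" langchain python-dotenv

For OPENAI_API_KEY, create an account on OpenAI, generate an API key, and set it in your environment (load_dotenv also picks it up from a .env file). Command to set OPENAI_API_KEY in the environment:

export OPENAI_API_KEY=sk..........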
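The setup above relies on `openai.ChatCompletion`, which only exists in openai versions before 1.0. If you have openai 1.x installed instead, a minimal equivalent might look like the sketch below. It assumes the v1 `OpenAI` client, which reads `OPENAI_API_KEY` from the environment by default; `build_messages` is a helper name introduced here for illustration.

```python
def build_messages(prompt):
    # A single user message, exactly as in the original get_completion
    return [{"role": "user", "content": prompt}]


def get_completion(prompt, model="gpt-3.5-turbo"):
    # Imported inside the function so this module loads even without openai installed
    from openai import OpenAI

    client = OpenAI()  # reads OPENAI_API_KEY from the environment
    response = client.chat.completions.create(
        model=model,
        messages=build_messages(prompt),
        temperature=0,  # degree of randomness of the model's output
    )
    # In openai 1.x the message is an object, so use .content instead of ["content"]
    return response.choices[0].message.content
```

The rest of the examples only call `get_completion(prompt)`, so either version can be used with them unchanged.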

Let’s begin:

1. Zero-Shot Prompting:

Zero-shot prompting asks a model to perform a task without showing it any examples of that task and without any additional training. LLMs have already been trained on very large datasets, which is why they can often handle simpler queries this way.

Example:

prompt = """
Classify the text into neutral, negative or positive.
Text: I think the vacation is okay.
Sentiment:
"""
response = get_completion(prompt)
print(response)

Output: Neutral

In the example above, the model predicts the sentiment directly, because its training has already given it enough knowledge for this task.
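A zero-shot prompt is just an instruction plus the input, with no solved examples. The sketch below builds the sentiment prompt above for any text and maps the model's free-form reply ("Neutral", "Sentiment: Positive.", and so on) back to one of the allowed labels. The names `zero_shot_prompt` and `parse_label` are hypothetical helpers, not part of any library.

```python
LABELS = ("neutral", "negative", "positive")


def zero_shot_prompt(text, labels=LABELS):
    # Instruction + input only: no examples of the task are included
    return (
        f"Classify the text into {', '.join(labels[:-1])} or {labels[-1]}.\n"
        f"Text: {text}\n"
        "Sentiment:"
    )


def parse_label(reply, labels=LABELS):
    # Return the first label (in `labels` order) that appears in the reply,
    # ignoring case; None if the model answered with something else
    lowered = reply.lower()
    for label in labels:
        if label in lowered:
            return label
    return None


print(zero_shot_prompt("I think the vacation is okay."))
print(parse_label("Neutral"))  # neutral
```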

2. Few-Shot Prompting:

Despite their impressive zero-shot abilities, large language models still fall short on more complex tasks in that setting. Few-shot prompting includes a few worked examples (demonstrations) in the prompt, which enables in-context learning and improves the model’s performance.

Example:

prompt = """
A "Lion" is a Big, dangerous animal native to Africa.
An example of a sentence that uses the word Lion is: We were travelling in Africa and we saw these very dangerous Lions.
To do a "enthusiasm" means to jump up and down really fast. An example of a sentence that uses the word enthusiasm is:
"""
response = get_completion(prompt)
print(response)

Output: The crowd erupted with enthusiasm as their favorite team scored the winning goal.
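The few-shot pattern is mechanical: solved examples first, then the new case left open for the model to complete. A minimal sketch that assembles such a prompt from a list of examples (`few_shot_prompt` is a hypothetical helper name). The usage line swaps in an invented word, "farduddle", as a placeholder: with a made-up word the model can only get its meaning from the prompt, which makes it easier to see whether the examples are actually being used.

```python
def few_shot_prompt(examples, word, definition):
    """Build a few-shot prompt.

    examples: list of (word, definition, example_sentence) tuples, where each
    definition is a full sentence. The new word is added last and left open.
    """
    blocks = [
        f"{ex_definition}\nAn example of a sentence that uses the word {ex_word} is: {sentence}"
        for ex_word, ex_definition, sentence in examples
    ]
    # The final block has no sentence, so the model is expected to complete it
    blocks.append(f"{definition}\nAn example of a sentence that uses the word {word} is:")
    return "\n\n".join(blocks)


examples = [
    ("Lion",
     'A "Lion" is a big, dangerous animal native to Africa.',
     "We were travelling in Africa and we saw these very dangerous Lions."),
]
print(few_shot_prompt(
    examples,
    "farduddle",
    'To do a "farduddle" means to jump up and down really fast.',
))
```

Adding more tuples to `examples` gives more demonstrations without touching the prompt wording.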

Drawback:

Standard few-shot prompting works well for many tasks but is still not a perfect technique, especially when dealing with more complex reasoning tasks.

Example:

prompt = """
The odd numbers in this group add up to an even number: 4, 8, 9, 15, 12, 2, 1.
A: The answer is False.
The odd numbers in this group add up to an even number: 17, 10, 19, 4, 8, 12, 24.
A: The answer is True.
The odd numbers in this group add up to an even number: 16, 11, 14, 4, 8, 13, 24.
A: The answer is True.
The odd numbers in this group add up to an even number: 15, 32, 5, 13, 82, 7, 2.
A:
"""
response = get_completion(prompt)
print(response)

Output: The answer is False.

Here the correct answer is True: the odd numbers add up to 15 + 5 + 13 + 7 = 40, which is even, but the model does not get there. We can resolve this with a more advanced prompt engineering technique, CoT prompting.
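The correct answers to these puzzles are easy to compute, so a few lines of Python can act as ground truth when you compare model outputs (`odd_sum_is_even` is a helper name introduced for this sketch):

```python
def odd_sum_is_even(numbers):
    # Keep only the odd numbers, add them up, and check the parity of the sum
    odds = [n for n in numbers if n % 2 == 1]
    total = sum(odds)
    return odds, total, total % 2 == 0


odds, total, answer = odd_sum_is_even([15, 32, 5, 13, 82, 7, 2])
print(odds, total, answer)  # [15, 5, 13, 7] 40 True
```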

3. Chain-of-Thought Prompting:

Chain-of-thought (CoT) prompting enables complex reasoning by having the model go through intermediate reasoning steps, which helps it break the problem down in more detail. Let’s take the same example as above, but this time each example answer includes its intermediate reasoning steps.

Example:

prompt = """
The odd numbers in this group add up to an even number: 4, 8, 9, 15, 12, 2, 1.
A: Adding all the odd numbers (9, 15, 1) gives 25. The answer is False.
The odd numbers in this group add up to an even number: 17, 10, 19, 4, 8, 12, 24.
A: Adding all the odd numbers (17, 19) gives 36. The answer is True.
The odd numbers in this group add up to an even number: 15, 32, 5, 13, 82, 7, 2.
A:
"""
response = get_completion(prompt)
print(response)

Output: A: Adding all the odd numbers (15, 5, 13, 7) gives 40. The answer is True.

Now we get the right answer, because the model works through the problem step by step, the way the examples showed it.
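Because the worked steps are mechanical here, the CoT demonstrations can be generated instead of typed by hand. A sketch that reproduces the prompt above from plain lists of numbers (`cot_answer` and `cot_prompt` are hypothetical names for this illustration):

```python
def cot_answer(numbers):
    # Spell out the intermediate step (which numbers are odd and their sum)
    # before the final verdict, in the same wording as the examples above
    odds = [n for n in numbers if n % 2 == 1]
    total = sum(odds)
    verdict = "True" if total % 2 == 0 else "False"
    listed = ", ".join(str(n) for n in odds)
    return f"Adding all the odd numbers ({listed}) gives {total}. The answer is {verdict}."


def cot_prompt(solved, question):
    # solved: groups whose worked answers are shown; question: the group left open
    lines = []
    for numbers in solved:
        group = ", ".join(str(n) for n in numbers)
        lines.append(f"The odd numbers in this group add up to an even number: {group}.")
        lines.append(f"A: {cot_answer(numbers)}")
    group = ", ".join(str(n) for n in question)
    lines.append(f"The odd numbers in this group add up to an even number: {group}.")
    lines.append("A:")
    return "\n".join(lines)


print(cot_prompt([[4, 8, 9, 15, 12, 2, 1], [17, 10, 19, 4, 8, 12, 24]],
                 [15, 32, 5, 13, 82, 7, 2]))
```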

Let’s take one more example:

# First, ask for the answer directly:
prompt = """
please solve (10 + 2) * (-4 - 1), give direct answer
"""
response = get_completion(prompt)
print(response)

Output: The direct answer to (10 + 2) * (-4 - 1) is -72.

This answer is wrong: (10 + 2) * (-4 - 1) = 12 * (-5) = -60.

# Now ask for the answer in steps:
prompt = """
please solve (10 + 2) * (-4 - 1), please solve in steps
"""
response = get_completion(prompt)
print(response)

Output:

Step 1: Simplify the expression inside the parentheses.

(10 + 2) * (-4 - 1) = 12 * (-4 - 1)

Step 2: Simplify the expression inside the parentheses.

12 * (-4 - 1) = 12 * (-5)

Step 3: Multiply the two numbers.

12 * (-5) = -60

Therefore, (10 + 2) * (-4 - 1) = -60.

This time we get the right answer.

As we just saw, CoT is a powerful prompt engineering technique when the prompt supplies proper intermediate steps or asks the model to produce them.
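Asking for steps without giving any worked examples, as in the second prompt, is often called zero-shot CoT. When you run such prompts from code you also need to pull the final answer out of the reasoning text. The sketch below assumes the final answer is the last integer in the reply, which holds for the two outputs quoted above but is only a heuristic; `step_by_step` and `final_number` are hypothetical names.

```python
import re


def step_by_step(question):
    # Zero-shot CoT: no worked examples, only an instruction to reason in steps
    return f"please solve {question}, please solve in steps"


def final_number(reply):
    # Heuristic: treat the last integer in the reply as the final answer
    numbers = re.findall(r"-?\d+", reply)
    return int(numbers[-1]) if numbers else None


expected = (10 + 2) * (-4 - 1)  # 12 * (-5) = -60
direct = "The direct answer to (10 + 2) * (-4 - 1) is -72."
stepwise = "Therefore, (10 + 2) * (-4 - 1) = -60."
print(final_number(direct) == expected)    # False
print(final_number(stepwise) == expected)  # True
```

For answers with decimals or units, the regular expression would need adjusting, or you can ask the model to end its reply with a fixed "Answer:" line and parse that instead.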

4. Tree-of-Thought Prompting:

Tree-of-thought (ToT) prompting aims to improve the reasoning of LLMs further. It lets the model explore several lines of reasoning, evaluate them as it goes, and correct its own errors while building on what it has already worked out.

Let’s try to understand this with an example.

First, let’s try to solve it with zero-shot prompting:

prompt = """
Bob is in the living room.
He walks to the kitchen, carrying a cup.
He puts a ball in the cup and carries the cup to the bedroom.
Then he walks to the study room and
He turns the cup upside down, then walks to the garden.
He puts the cup down in the garden, then walks to the garage.
Where is the ball?
"""
response = get_completion(prompt)
print(response)

Output: The ball is in the cup in the garden.
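To check which answer is right, we can encode the story as a list of events and replay it. This is a hand-written model of the story (the event names are made up for this sketch), not something the LLM produces:

```python
def where_is_the_ball(events, start="living room"):
    """Replay the story; events are (action, room) pairs, room is None unless walking."""
    bob = start
    cup = start              # Bob has the cup with him from the start
    carrying_cup = True
    ball_in_cup = False
    ball = None              # the ball's location once it is out of the cup
    for action, room in events:
        if action == "walk":
            bob = room
            if carrying_cup:
                cup = room   # the cup travels with Bob
        elif action == "put_ball_in_cup":
            ball_in_cup = True
        elif action == "turn_cup_over" and ball_in_cup:
            ball_in_cup = False
            ball = bob       # the ball falls out where the cup is turned over
        elif action == "put_cup_down":
            carrying_cup = False
    return cup if ball_in_cup else ball


STORY = [
    ("walk", "kitchen"),
    ("put_ball_in_cup", None),
    ("walk", "bedroom"),
    ("walk", "study room"),
    ("turn_cup_over", None),
    ("walk", "garden"),
    ("put_cup_down", None),
    ("walk", "garage"),
]
print(where_is_the_ball(STORY))  # study room
```

Dropping the `turn_cup_over` event makes the function return "garden", which matches the zero-shot answer: the upside-down step is exactly what the model missed.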

Right answer: the ball is in the study room, because it falls out when Bob turns the cup upside down there. We got the wrong answer, so now we will try to solve this with CoT prompting.

Example:

prompt = """
Bob is in the living room.
He walks to the kitchen, carrying a cup.
He puts a ball in the cup and carries the cup to the bedroom.
Then he walks to the study room and
He turns the cup upside down, then walks to the garden.
He puts the cup down in the garden, then walks to the garage.
Where is the ball?
Think carefully and logically, explaining your answer.
"""
response = get_completion(prompt)
print(response)

Output: The ball is in the living room.

We still don’t get the right answer, so let’s try ToT prompting. The principle is to give the LLM the opportunity to explore multiple branches of reasoning while evaluating itself as it progresses.
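The "three experts" instruction used here does not depend on the puzzle itself, so it can be kept as a reusable template and wrapped around any question. A sketch (the `tot_prompt` name is hypothetical; the template text is the one from the prompt in this section):

```python
TOT_TEMPLATE = """Imagine three different experts are answering this question.
All experts will write down 1 step of their thinking,
then share it with the group.
Then all experts will go on to the next step, etc.
If any expert realises they're wrong at any point then they leave.
The question is in single quotes:
'{question}'"""


def tot_prompt(question):
    # Wrap any question in the single-prompt "three experts" ToT template
    return TOT_TEMPLATE.format(question=question.strip())


BOB_PUZZLE = """Bob is in the living room.
He walks to the kitchen, carrying a cup.
He puts a ball in the cup and carries the cup to the bedroom.
Then he walks to the study room and
He turns the cup upside down, then walks to the garden.
He puts the cup down in the garden, then walks to the garage.
Where is the ball?"""

print(tot_prompt(BOB_PUZZLE))
```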

prompt = """
Imagine three different experts are answering this question.
All experts will write down 1 step of their thinking,
then share it with the group.
Then all experts will go on to the next step, etc.
If any expert realises they're wrong at any point then they leave.
The question is in single quotes:
'Bob is in the living room.
He walks to the kitchen, carrying a cup.
He puts a ball in the cup and carries the cup to the bedroom.
Then he walks to the study room and
He turns the cup upside down, then walks to the garden.
He puts the cup down in the garden, then walks to the garage.
Where is the ball?'
"""
response = get_completion(prompt)
print(response)

Output:

Expert 1: "Based on the information given, Bob starts in the living room and walks to the kitchen while carrying a cup."

Expert 2: "Next, Bob puts a ball in the cup and carries the cup to the bedroom."

Expert 3: "Then, Bob walks to the study room and turns the cup upside down."

Expert 1: "After that, Bob walks to the garden and puts the cup down there."

Expert 2: "Finally, Bob walks to the garage."

Expert 3: "Since the ball was put in the cup and the cup was turned upside down in the study room, the ball should still be in the study room. Therefore, the ball is not in the garage."

Now we get the right answer. The prompt encourages the model to reason along several branches (the three "experts"), evaluate each step as it goes, and finally combine the branches into a single answer.
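The prompt above packs the tree-of-thought idea into a single request. In the search-based form of ToT, a program explicitly expands several candidate reasoning steps ("thoughts"), scores them, and prunes the weak branches. The sketch below shows that loop as a breadth-first search with a beam. The `propose` and `score` functions are plain Python stand-ins (an assumption for illustration) for what would be LLM calls, and the number puzzle is a made-up toy problem.

```python
def tree_of_thought(root, propose, score, is_solution, depth=3, beam=2):
    """Breadth-first tree search over partial solutions ("thoughts").

    propose(state) -> list of next states       (an LLM "generate thoughts" call in practice)
    score(state)   -> number, higher is better  (an LLM self-evaluation in practice)
    is_solution(state) -> bool
    Only the `beam` best states survive each level; the other branches are pruned.
    """
    frontier = [root]
    for _ in range(depth):
        # Expand every surviving branch; dict.fromkeys drops duplicates, keeping order
        candidates = list(dict.fromkeys(
            nxt for state in frontier for nxt in propose(state)
        ))
        if not candidates:
            break
        for state in candidates:
            if is_solution(state):
                return state
        # Evaluation step: keep only the most promising branches
        frontier = sorted(candidates, key=score, reverse=True)[:beam]
    # No solution within `depth` levels: return the best branch found
    return max(frontier, key=score)


# Toy problem standing in for LLM calls: reach 10 from 1 using "+1" or "*2".
# Each state is the path of numbers so far.
TARGET = 10


def propose(path):
    return [path + (path[-1] + 1,), path + (path[-1] * 2,)]


def score(path):
    return -abs(TARGET - path[-1])


def is_solution(path):
    return path[-1] == TARGET


print(tree_of_thought((1,), propose, score, is_solution, depth=4, beam=3))
# (1, 2, 4, 5, 10)
```

With `beam=2` the same call returns (1, 2, 4, 8, 9) instead, because the branch through 5 is pruned at the third level: the beam width trades cost against the chance of keeping the right branch alive.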

Conclusion:

There are several more powerful prompt engineering techniques, such as ReAct prompting, generated knowledge prompting and self-consistency, that we can use when writing a good prompt. Which technique to choose depends on the use case, and sometimes a basic technique works just as well as an advanced one.
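Self-consistency, one of the techniques listed above, samples several chain-of-thought answers for the same prompt and keeps the most common final answer. A minimal sketch of the voting step, assuming a `sample` callable that returns one final answer per call (a hypothetical stand-in for an LLM call at a temperature above 0, since with temperature=0 every sample would be nearly identical):

```python
from collections import Counter


def self_consistency(sample, prompt, n=5):
    # Draw n independent answers and return the majority answer with its vote count
    answers = [sample(prompt) for _ in range(n)]
    answer, votes = Counter(answers).most_common(1)[0]
    return answer, votes


# Fake sampler replaying fixed answers, so the example runs without an API key
fake_replies = iter(["True", "False", "True", "True", "False"])
answer, votes = self_consistency(lambda prompt: next(fake_replies), "odd-number question", n=5)
print(answer, votes)  # True 3
```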

Note: Prompt engineering is an active research topic, and most of the examples here are based on research papers. LLMs keep evolving: this article uses gpt-3.5-turbo, and with another model you might get different results. That is totally fine, because this article is about how to write more powerful prompts that solve our business problems.